-

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant Medium

ID: RysKtNuDUL

-

Measure of Agreement | IT (NUIT) | Newcastle University

ID: Ls5PRQuZdC

-

Interrater the kappa statistic - Biochemia Medica

ID: 9RRynAdviL

-

Cohen's kappa -

ID: 64HqdPHQKQ

-

Cohen's kappa in SPSS Statistics - Procedure, output and interpretation of using a example | Laerd Statistics

ID: lKtnrtcvv2

-

Understanding calculation of the kappa statistic: A measure of inter-observer reliability Semantic Scholar

ID: s3hviVC0TP

-

statistics Inter-rater agreement (Cohen's Kappa) Stack Overflow

ID: qXL2Az5NsN

-

ID: Cy8Yz8Lj5r

-

Solved 8. True/False questions - Cohen's Kappa can be | Chegg.com

ID: qH6Ivqd1NI

-

What is Kappa and How It Measure Inter-rater Reliability?

ID: BEpNKJSj4H

-

Performance Cohen's Kappa statistic - The Data

ID: jpMqnv3tyT

-

Cohen's Kappa in Best Reference

ID: 6svUdbFFtj

-

Measure of Agreement across Different | Table

ID: d6wCV7T7td

-

Kappa Value | Reliability -

ID: d1FfswNNs2

-

What is Kappa and How It Measure Inter-rater Reliability?

ID: Dkcs6U2AbS

-

Cohen's Kappa Real Excel

ID: MUvMce32SS

-

Weighted Cohen's | Real Statistics Using

ID: Ke9NSzpLjZ

-

Interrater the kappa statistic - Biochemia Medica

ID: 9Fo8ctIoGj

-

Method review of correct - ScienceDirect

ID: XcJjZr22lD

-

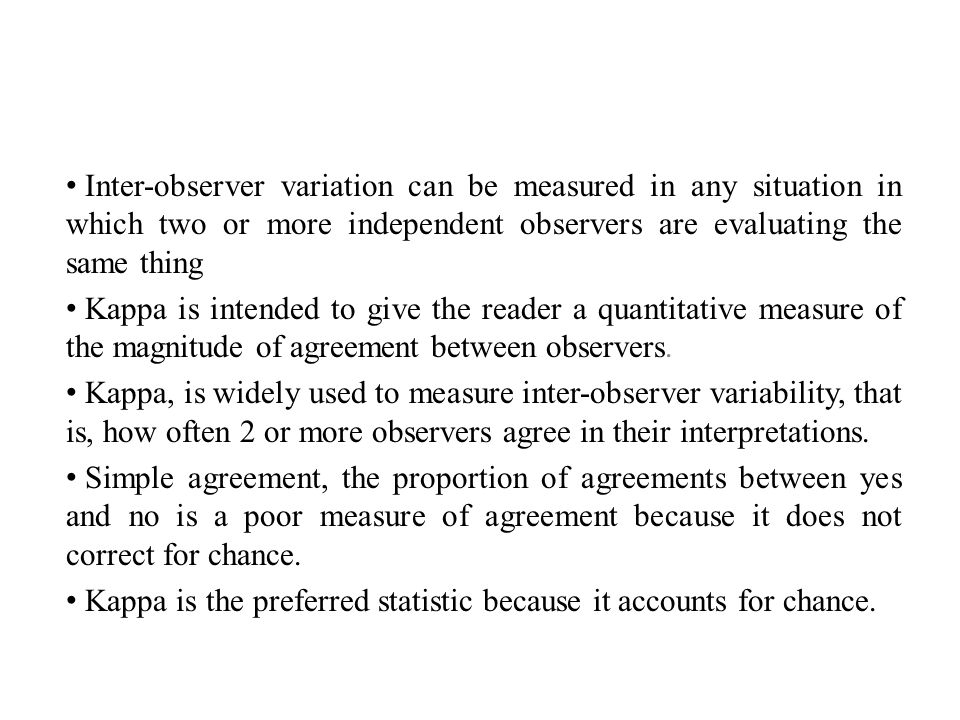

Inter-observer variation can be measured in situation in which two or more independent observers are evaluating the is intended to. - ppt download

ID: eyMivWtegq

-

Understanding the calculation of kappa statistic: A measure of inter-observer reliability Mishra - Int J Acad Med

ID: SOMFNrOpUC

-

Inter-rater agreement

ID: nN6Jjn49l6

-

Table agreement: the kappa statistic. | Semantic Scholar

ID: xJoYtynMdG
